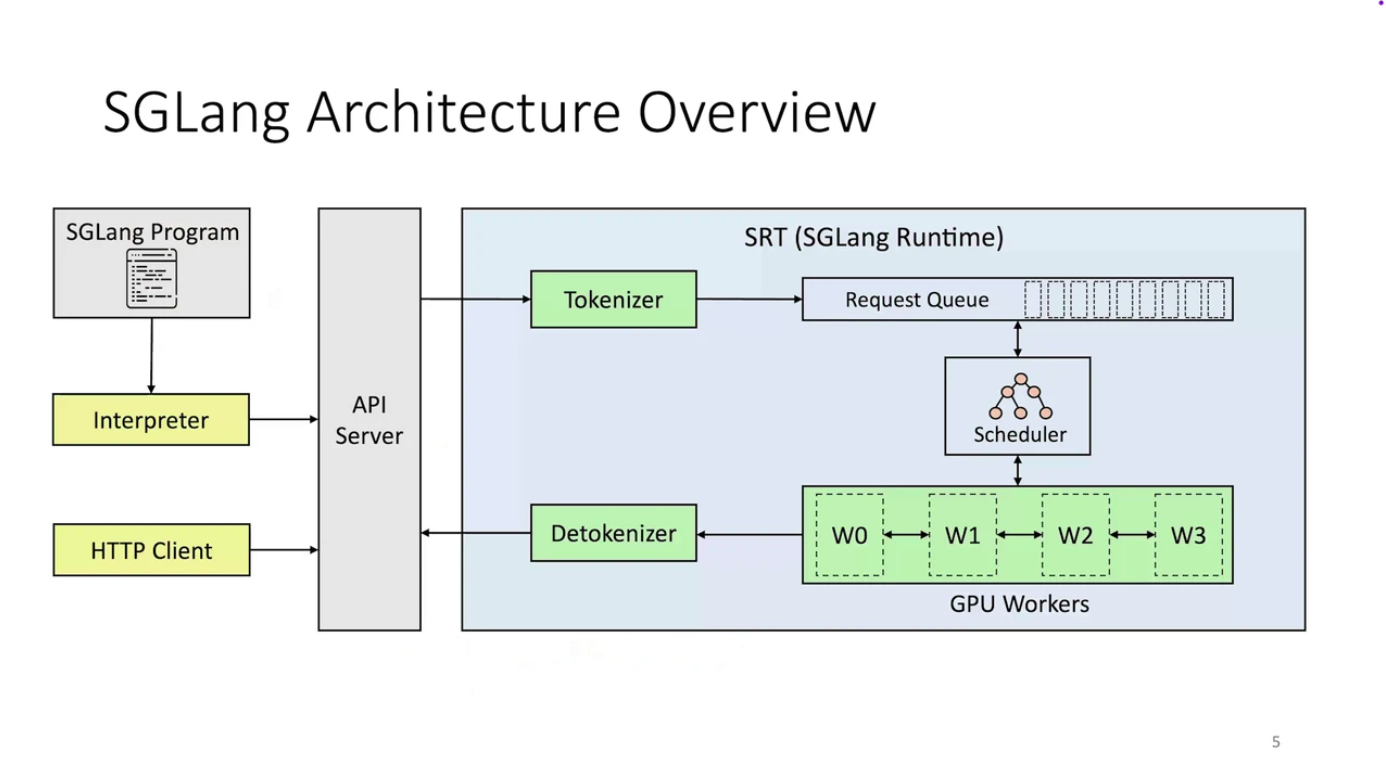

SGLang Architecture

SGLang isn’t just a domain-specific language (DSL). It’s a complete, integrated execution system with a clear separation of functionality:

| Layer | What it does | Why it matters |
|---|---|---|
| Frontend | Where you define your LLM logic (with `gen`, `fork`, `join`, etc.) | This keeps your code clean and readable, and your workflows easy to reuse. |
| Backend | Where SGLang works out how to run your logic most efficiently. | This is where the speed, scalability, and optimized inference come from. |

| Primitive | What it does | Example or typical use |
|---|---|---|
| `gen()` | Generates a text span | `gen("title", stop="\n")` |
| `fork()` | Splits execution into multiple branches | For parallel sub-tasks |
| `join()` | Merges branches back together | For combining outputs |
| `select()` | Chooses one option from many | For controlled logic, like multiple choice |
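As a rough illustration of the `select()` row, the sketch below picks one option from a fixed list by comparing scores, so the result can only ever be one of the listed choices. It is a plain-Python toy, not SGLang's implementation: the `select` helper here is a hypothetical stand-in, the log-probability numbers are made up, and scoring by mean per-token log-probability is an assumed strategy chosen for the example.

```python
def select(choices, token_logprobs):
    """Toy stand-in: return the choice with the highest mean token log-probability."""
    def mean_logprob(choice):
        lps = token_logprobs[choice]
        return sum(lps) / len(lps)

    # Only the listed choices are candidates, so the output is always one of them.
    return max(choices, key=mean_logprob)


# Hypothetical per-token log-probabilities a model might assign to each option.
token_logprobs = {
    "approve": [-0.2, -0.4],
    "reject": [-1.5, -0.9, -1.2],
    "needs_review": [-0.8, -0.7],
}

decision = select(list(token_logprobs), token_logprobs)
print(decision)
```

Averaging per token keeps longer options from being penalized simply for containing more tokens.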

| Feature | Traditional Engines (vLLM, TGI) | SGLang |
|---|---|---|
| Programming Model | Sequential API calls with manual prompt chaining | Native structured logic with `gen()`, `fork()`, `join()`, `select()` |
| Memory Management | Basic KV caching, often discarded between calls | RadixAttention: Intelligent prefix-aware cache reuse (up to 6x faster) |
| Output Control | Hope and pray for correct formatting | Compressed FSMs: Guaranteed structured output (JSON, XML, etc.) |
| Parallel Processing | Manual batching and coordination | Built-in `fork()` and `join()` for parallel execution |
| Performance | Standard inference optimization | PyTorch-native with `torch.compile()`, quantization, sparse inference |
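To make the Memory Management row more concrete, here is a toy sketch of prefix-aware reuse. It counts how many leading tokens of a new request match an earlier request, which is the part whose cached work could be reused instead of recomputed. This only illustrates the idea and is not SGLang's RadixAttention implementation; the whitespace tokenizer, the `ToyPrefixCache` class, and the prompts are assumptions made for the example.

```python
def shared_prefix_len(a, b):
    """Count the leading tokens that two token sequences have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


class ToyPrefixCache:
    """Remembers past token sequences and reports the longest reusable prefix."""

    def __init__(self):
        self._seen = []

    def lookup_and_store(self, tokens):
        """Return (reused_tokens, new_tokens) for this request, then remember it."""
        reused = max((shared_prefix_len(tokens, old) for old in self._seen), default=0)
        self._seen.append(list(tokens))
        return reused, len(tokens) - reused


# Two requests that share the same system prompt (split on whitespace for simplicity).
system = "You are an ad reviewer . Answer briefly .".split()
cache = ToyPrefixCache()

first = cache.lookup_and_store(system + "Is the logo visible ?".split())
second = cache.lookup_and_store(system + "Is the text readable ?".split())

print(first)   # nothing cached yet, so every token is new
print(second)  # the shared prefix is reused; only the differing tail is new
```

A real engine stores prefixes in a tree so lookups stay fast as the number of cached requests grows; the linear scan above is only for readability.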

Tutorial

Step 1: Project Setup

Create the project structure:

Step 2: Configure Dependencies

The vision-language model (VLM) is Qwen3-VL-30B-A3B-Instruct-FP8, which requires significant GPU memory. The `cerebrium.toml` file defines the environment, hardware, and scaling settings. This configuration uses an ADA_L40 GPU and includes:

- Hardware settings for GPU, CPU, and memory allocation

- Scaling parameters to control instance counts

- Required pip packages: SGLang, flashinfer (the chosen backend), and PyTorch

- APT system dependencies

- FastAPI server configuration for hosting the API

Update your `cerebrium.toml` with:

Step 3: Implement the Ad Analysis Logic

Cerebrium does not enforce any special class design or application architecture: write Python code as if running locally. The code below sets up the SGLang Runtime Engine (Backend) with FastAPI and loads the model on container startup. The first request to a cold container waits for the model to load; subsequent requests reuse the loaded engine and skip that cost. In your `main.py` file:

The analysis logic uses `fork`, which runs many prompts in parallel and brings the results together. Because the branches execute concurrently, many evaluations can run at once with little increase in total latency. The results are then structured in a specific format for the response.
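The fork-then-join pattern can be sketched with the standard library alone. The snippet below is an illustration, not SGLang's API: `fake_model_call` is a hypothetical stand-in that sleeps instead of calling a model, and the criteria names are made up. It shows why concurrent branches finish in roughly the time of the slowest branch rather than the sum of all of them.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_model_call(criterion, delay=0.2):
    """Hypothetical stand-in for one model evaluation; sleeps instead of generating."""
    time.sleep(delay)
    return f"{criterion}: ok"


def evaluate_in_parallel(criteria):
    """Fork one task per criterion, then join the results into a single dict."""
    with ThreadPoolExecutor(max_workers=len(criteria)) as pool:
        # pool.map returns results in the same order as the inputs.
        results = list(pool.map(fake_model_call, criteria))
    return dict(zip(criteria, results))


criteria = ["clarity", "branding", "call_to_action", "tone"]

start = time.perf_counter()
report = evaluate_in_parallel(criteria)
elapsed = time.perf_counter() - start

print(report)
print(f"elapsed: {elapsed:.2f}s for {len(criteria)} branches")
```

Run sequentially, the four simulated calls would take about 0.8 seconds; run concurrently, they take about as long as one. On a real GPU backend the branches share compute, so latency can still grow with the number of branches; the sketch only shows the coordination pattern.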

Step 4: Deploy Your Application

Run:

Example Response

This tutorial used `fork()` for parallel processing and SGLang’s built-in output control.

You can find the complete code for this tutorial in our examples repository.